80 terabytes of archived web crawl data available for research

Internet Archive crawls and saves web pages and makes them available for viewing through the Wayback Machine because we believe in the importance of archiving digital artifacts for future generations to learn from. In the process, of course, we accumulate a lot of data.

We are interested in exploring how others might be able to interact with or learn from this content if we make it available in bulk. To that end, we would like to experiment with offering access to one of our crawls from 2011, which consists of about 80 terabytes of WARC files containing captures of about 2.7 billion URIs. The files contain text content and any media that we were able to capture, including images, Flash, videos, etc.
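For readers unfamiliar with the format, a WARC file is a sequence of records, each made up of a version line, named headers, a blank line, and a payload whose size is given by the `Content-Length` header. The following is a minimal sketch, using only the Python standard library and a tiny in-memory sample rather than real crawl data, of how one might walk such a file and list the captured URIs. The helpers `make_record` and `iter_warc_records` are hypothetical names invented for this illustration, and the sketch assumes an uncompressed stream; for real work a dedicated WARC library is likely a better choice.

```python
import io


def make_record(rec_type, uri, payload):
    """Build one sample WARC record (hypothetical helper, for illustration only)."""
    head = (
        "WARC/1.0\r\n"
        f"WARC-Type: {rec_type}\r\n"
        f"WARC-Target-URI: {uri}\r\n"
        f"Content-Length: {len(payload)}\r\n"
        "\r\n"
    ).encode("utf-8")
    # Each record is followed by two CRLF pairs.
    return head + payload + b"\r\n\r\n"


def iter_warc_records(stream):
    """Yield (headers, payload) for each record in an uncompressed WARC stream."""
    while True:
        line = stream.readline()
        if not line:
            return  # end of stream
        if not line.strip():
            continue  # blank separator lines between records
        if not line.startswith(b"WARC/"):
            raise ValueError(f"unexpected line where a record should start: {line!r}")
        headers = {}
        while True:
            line = stream.readline()
            if line in (b"\r\n", b"\n", b""):
                break  # blank line ends the header block
            name, _, value = line.decode("utf-8").partition(":")
            headers[name.strip()] = value.strip()
        # Read the payload by length, so blank lines inside it are harmless.
        payload = stream.read(int(headers["Content-Length"]))
        yield headers, payload


# Made-up sample data: two responses and one request.
sample = b"".join([
    make_record("response", "http://example.com/",
                b"HTTP/1.1 200 OK\r\nContent-Type: text/html\r\n\r\n<html></html>"),
    make_record("request", "http://example.com/",
                b"GET / HTTP/1.1\r\nHost: example.com\r\n\r\n"),
    make_record("response", "http://example.com/logo.png",
                b"\x89PNG\r\n\x1a\n\r\n\r\n"),
])

captured = [
    headers["WARC-Target-URI"]
    for headers, _payload in iter_warc_records(io.BytesIO(sample))
    if headers["WARC-Type"] == "response"
]
for uri in captured:
    print(uri)
```

If the files are gzip-compressed, wrapping the file object with Python's `gzip.open` before passing it to the reader should be enough for a sketch like this one.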

What’s in the data set:

- Crawl start date: 09 March, 2011

- Crawl end date: 23 December, 2011

- Number of captures: 2,713,676,341

- Number of unique URLs: 2,273,840,159

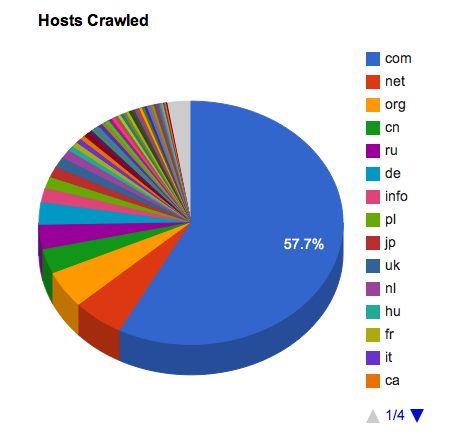

- Number of hosts: 29,032,069
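A few rough ratios follow from the figures above. This is a back-of-the-envelope sketch only: it assumes "80 terabytes" means 80 × 10^12 bytes, and since that total is approximate, the average size per capture is approximate too. The variable names are chosen for this illustration.

```python
# Figures taken from the data set summary above.
CAPTURES = 2_713_676_341
UNIQUE_URLS = 2_273_840_159
HOSTS = 29_032_069

# Assumption: "about 80 terabytes" taken as 80 * 10**12 bytes.
TOTAL_BYTES = 80 * 10**12

captures_per_url = CAPTURES / UNIQUE_URLS
urls_per_host = UNIQUE_URLS / HOSTS
avg_bytes_per_capture = TOTAL_BYTES / CAPTURES
repeat_captures = CAPTURES - UNIQUE_URLS  # captures beyond the first per URL

print(f"captures per unique URL: {captures_per_url:.2f}")
print(f"unique URLs per host:    {urls_per_host:.1f}")
print(f"average capture size:    {avg_bytes_per_capture / 1000:.1f} kB")
print(f"repeat captures:         {repeat_captures:,}")
```

The averages hide a lot of variation: hosts differ widely in how many URLs were captured, and the totals include media files as well as text.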

The seed list for this crawl was a list of Alexa’s top 1 million web sites, retrieved close to the crawl start date. We used Heritrix (3.1.1-SNAPSHOT) crawler software and respected robots.txt directives. The scope of the crawl was not limited except for a few manually excluded sites. However, this was a somewhat experimental crawl for us, as we were using newly minted software to feed URLs to the crawlers, and we know there were some operational issues with it.

For example, in many cases we may not have crawled all of the embedded and linked objects in a page, since the URLs for these resources were added to queues that quickly grew bigger than the intended size of the crawl (and therefore we never got to them). We also included repeated crawls of some Argentinian government sites, so results broken down by country will be somewhat skewed. We have made many changes to how we do these wide crawls since this particular example, but we wanted to make the data available “warts and all” for people to experiment with. We have also done some further analysis of the content.

If you would like access to this set of crawl data, please contact us at info at archive dot org and let us know who you are and what you’re hoping to do with it. We may not be able to say “yes” to all requests, since we’re just figuring out whether this is a good idea, but everyone will be considered.
